Introduction to Fast Scalable Machine Learning with H2O - Plano

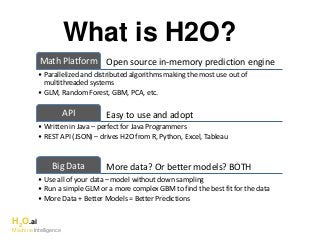

- 1. H2O.ai Machine Intelligence What is H2O? Open source in-memory prediction engineMath Platform • Parallelized and distributed algorithms making the most use out of multithreaded systems • GLM, Random Forest, GBM, PCA, etc. Easy to use and adoptAPI • Written in Java – perfect for Java Programmers • REST API (JSON) – drives H2O from R, Python, Excel, Tableau More data? Or better models? BOTHBig Data • Use all of your data – model without down sampling • Run a simple GLM or a more complex GBM to find the best fit for the data • More Data + Better Models = Better Predictions

- 2. H2O.ai Machine Intelligence H2O Production Analytics Workflow HDFS S3 NFS Distributed In-Memory Load Data Loss-less Compression H2O Compute Engine Production Scoring Environment Exploratory & Descriptive Analysis Feature Engineering & Selection Supervised & Unsupervised Modeling Model Evaluation & Selection Predict Data & Model Storage Model Export: Plain Old Java Object Your Imagination Data Prep Export: Plain Old Java Object Local

- 3. H2O.ai Machine Intelligence Ensembles Deep Neural Networks Algorithms on H2O • Generalized Linear Models with Regularization: Binomial, Gaussian, Gamma, Poisson and Tweedie • Naïve Bayes • Distributed Random Forest: Classification or regression models • Gradient Boosting Machine: Produces an ensemble of decision trees with increasing refined approximations • Deep learning: Create multi-layer feed forward neural networks starting with an input layer followed by multiple layers of nonlinear transformations Supervised Learning Statistical Analysis

- 4. H2O.ai Machine Intelligence Dimensionality Reduction Anomaly Detection Algorithms on H2O • K-means: Partitions observations into k clusters/groups of the same spatial size • Principal Component Analysis: Linearly transforms correlated variables to independent components • Generalized Low Rank Models*: extend the idea of PCA to handle arbitrary data consisting of numerical, Boolean, categorical, and missing data • Autoencoders: Find outliers using a nonlinear dimensionality reduction using deep learning Unsupervised Learning Clustering

- 5. JavaScript R Python Excel/Tableau Network Rapids Expression Evaluation Engine Scala Customer Algorithm Customer Algorithm Parse GLM GBM RF Deep Learning K-Means PCA In-H2O Prediction Engine H2O Software Stack Fluid Vector Frame Distributed K/V Store Non-blocking Hash Map Job MRTask Fork/Join Flow Customer Algorithm Spark Hadoop Standalone H2O

- 6. H2O.ai Machine Intelligence cientific Advisory Council Stephen Boyd Professor of EE Engineering Stanford University Rob Tibshirani Professor of Health Research and Policy, and Statistics Stanford University Trevor Hastie Professor of Statistics Stanford University

- 7. H2O and R

- 8. Reading Data from HDFS into H2O with R STEP 1 R user h2o_df = h2o.importFile(“hdfs://path/to/data.csv”)

- 9. Reading Data from HDFS into H2O with R H2O H2O H2O data.csv HTTP REST API request to H2O has HDFS path H2O ClusterInitiate distributed ingest HDFS Request data from HDFS STEP 2 2.2 2.3 2.4 R h2o.importFile() 2.1 R function call

- 10. Reading Data from HDFS into H2O with R H2O H2O H2O R HDFS STEP 3 Cluster IP Cluster Port Pointer to Data Return pointer to data in REST API JSON Response HDFS provides data 3.3 3.4 3.1h2o_df object created in R data.csv h2o_df H2O Frame 3.2 Distributed H2O Frame in DKV H2O Cluster

- 11. R Script Starting H2O GLM HTTP REST/JSON .h2o.startModelJob() POST /3/ModelBuilders/glm h2o.glm() R script Standard R process TCP/IP HTTP REST/JSON /3/ModelBuilders/glm endpoint Job GLM algorithm GLM tasks Fork/Join framework K/V store framework H2O process Network layer REST layer H2O - algos H2O - core User process H2O process Legend

- 12. R Script Retrieving H2O GLM Result HTTP REST/JSON h2o.getModel() GET /3/Models/glm_model_id h2o.glm() R script Standard R process TCP/IP HTTP REST/JSON /3/Models endpoint Fork/Join framework K/V store framework H2O process Network layer REST layer H2O - algos H2O - core User process H2O process Legend

- 13. H2O in Big Data Environments

- 14. Hadoop (and YARN) • You can launch H2O directly on Hadoop: $ hadoop jar h2odriver.jar … -nodes 3 –mapperXmx 50g • H2O uses Hadoop MapReduce to get CPU and Memory on the cluster, not to manage work – H2O mappers stay at 0% progress forever • Until you shut down the H2O job yourself – All mappers (3 in this case) must be running at the same time – The mappers communicate with each other • Form an H2O cluster on-the-spot within your Hadoop environment – No Hadoop reducers(!) • Special YARN memory settings for large mappers – yarn.nodemanager.resource.memory-mb – yarn.scheduler.maximum-allocation-mb • CPU resources controlled via –nthreads h2o command line argument

- 15. H2O on YARN Deployment Hadoop Gateway Node hadoop jar h2odriver.jar … YARN RM HDFSHDFS Data Nodes Hadoop Cluster H2 O H2O Mappers (YARN Containers) YARN Worker Nodes NM NM NM NM NM NM YARN Node Managers (NM) H2 O H2 O

- 16. Now You Have an H2O Cluster Hadoop Gateway Node hadoop jar h2odriver.jar … HDFSHDFS Data Nodes Hadoop Cluster H2 O H2O Mappers (YARN Containers) YARN Worker Nodes NM NM NM YARN Node Managers (NM) H2 O H2 O YARN RM

- 17. Read Data from HDFS Once Hadoop Gateway Node hadoop jar h2odriver.jar … HDFSHDFS Data Nodes Hadoop Cluster H2 O H2O Mappers (YARN Containers) YARN Worker Nodes NM NM NM YARN Node Managers (NM) H2 O H2 O YARN RM

- 18. Build Models in-Memory Hadoop Gateway Node hadoop jar h2odriver.jar … Hadoop Cluster H2 O H2O Mappers (YARN Containers) YARN Worker Nodes NM NM NM YARN Node Managers (NM) H2 O H2 O YARN RM

- 19. Sparkling Water

- 20. Sparkling Water Application Life Cycle Sparkling App jar file Spark Master JVM spark-submit Spark Worker JVM Spark Worker JVM Spark Worker JVM (1) (2) (3) Sparkling Water Cluster Spark Executor JVM H2O (4) Spark Executor JVM H2O Spark Executor JVM H2O

- 21. Sparkling Water Data Distribution H2O H2O H2O Sparkling Water Cluster Spark Executor JVM Data Source (e.g. HDFS) (1) (2) (3) H2O RDD Spark Executor JVM Spark Executor JVM Spark RDD

- 22. How H2O Processes Data

- 23. Distributed Data Taxonomy Vector H2O.ai Machine Intelligence

- 24. Distributed Data Taxonomy Vector The vector may be very large (billions of rows) - Stored as a compressed column (often 4x) - Access as Java primitives with on-the-fly decompression - Support fast Random access - Modifiable with Java memory semantics H2O.ai Machine Intelligence

- 25. Distributed Data Taxonomy Vector Large vectors must be distributed over multiple JVMs - Vector is split into chunks - Chunk is a unit of parallel access - Each chunk ~ 1000 elements - Per-chunk compression - Homed to a single node - Can be spilled to disk - GC very cheap H2O.ai Machine Intelligence

- 26. Distributed Data Taxonomy age gender zip_code ID A row of data is always stored in a single JVM Distributed data frame - Similar to R data frame - Adding and removing columns is cheap H2O.ai Machine Intelligence

- 27. Distributed Fork/Join JVM task JVM task JVM task JVM task JVM task Task is distributed in a tree pattern - Results are reduced at each inner node - Returns with a single result when all subtasks done H2O.ai Machine Intelligence

- 28. Distributed Fork/Join JVM task JVM task task task tasktaskchunk chunk chunk On each node the task is parallelized using Fork/Join H2O.ai Machine Intelligence

- 30. H2O on Storm (Spout) (Bolt) H2O Scoring POJO Real-time data Predictions Data Source (e.g. HDFS) H2O Emit POJO Model Real-time StreamModeling workflow

Notes de l'éditeur

- Data never goes into or through R R tells H2O what to do R has a pointer to the big data that lives in H2O Operations on the R object are intercepted using operator overleading and forwarded to H2O via the REST API H2OFrame object in R is a proxy for the big data in H2O R: df= H2O.imporrtFile(“hdfs://path/to/data.csv”) h2o>_df is an H2OFrame object in R

- Data never goes into or through R R tells H2O what to do R has a pointer to the big data that lives in H2O Operations on the R object are intercepted using operator overleading and forwarded to H2O via the REST API H2OFrame object in R is a proxy for the big data in H2O R: df= H2O.imporrtFile(“hdfs://path/to/data.csv”) h2o>_df is an H2OFrame object in R

- H2O MapReduce is not Hadoop MapReduce For more details, go visit cliff in his Hacker corner.

- Striking similarity to hadoop (1) User submits App to Spark cluster Master node (2) App distributed to Spark cluster Worker nodes (3) Spark Executor JVMs start for App (4) H2O instance starts within each Executor JVM (5) App’s Scala main program runs Once scala program drives both your H2O and Spark workloads

- Use Spark SQL to read data into a Spark RDD Convert Spark RDD to H2O RDD; H2O RDD is column-based and highly compressed (Not shown) Run modeling and prediction workflows with H2O (3) Convert H2O RDD (e.g. predictions) back to Spark RDD